$ sudo apt-get install linux-source linux-headers-generic

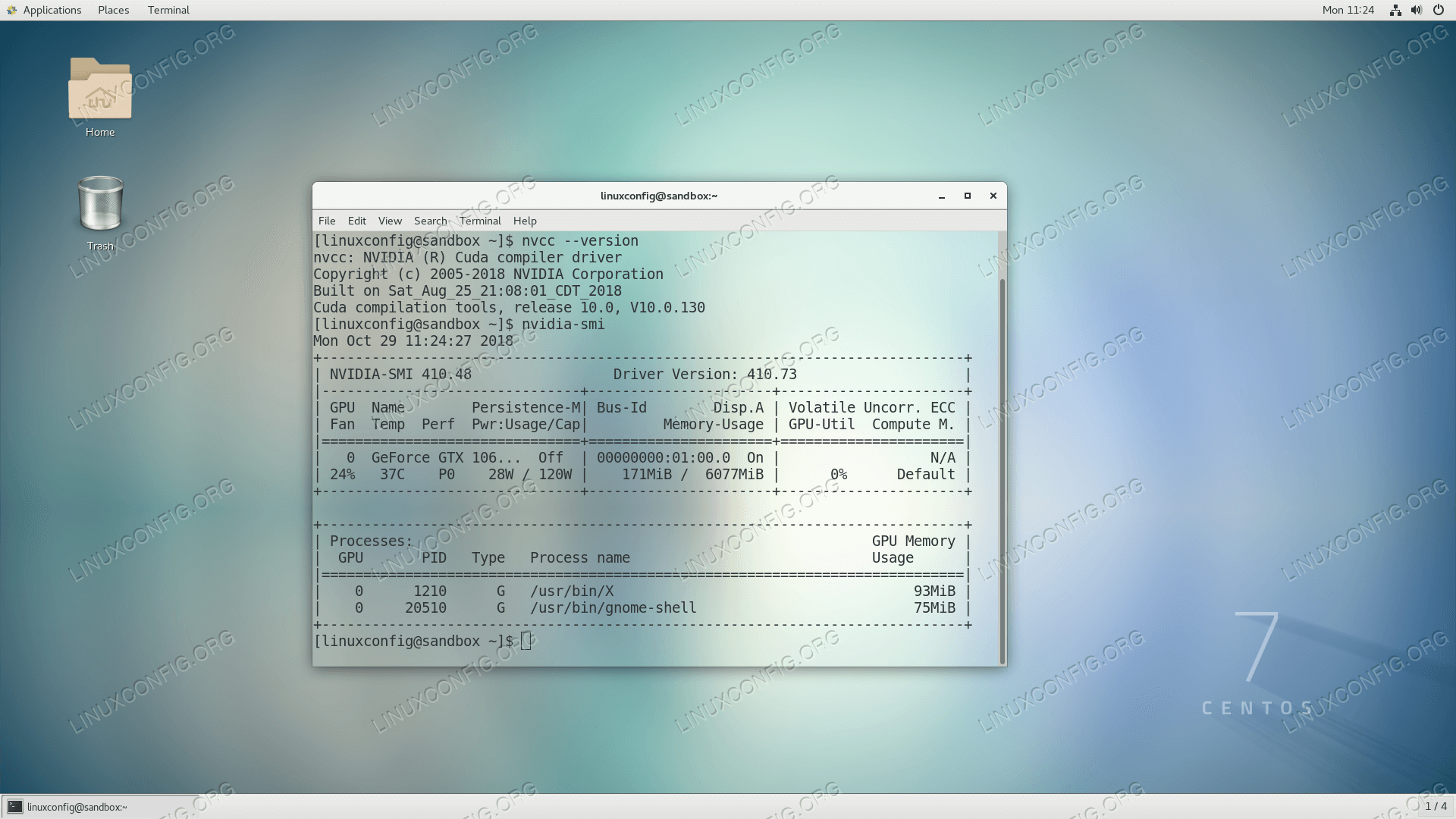

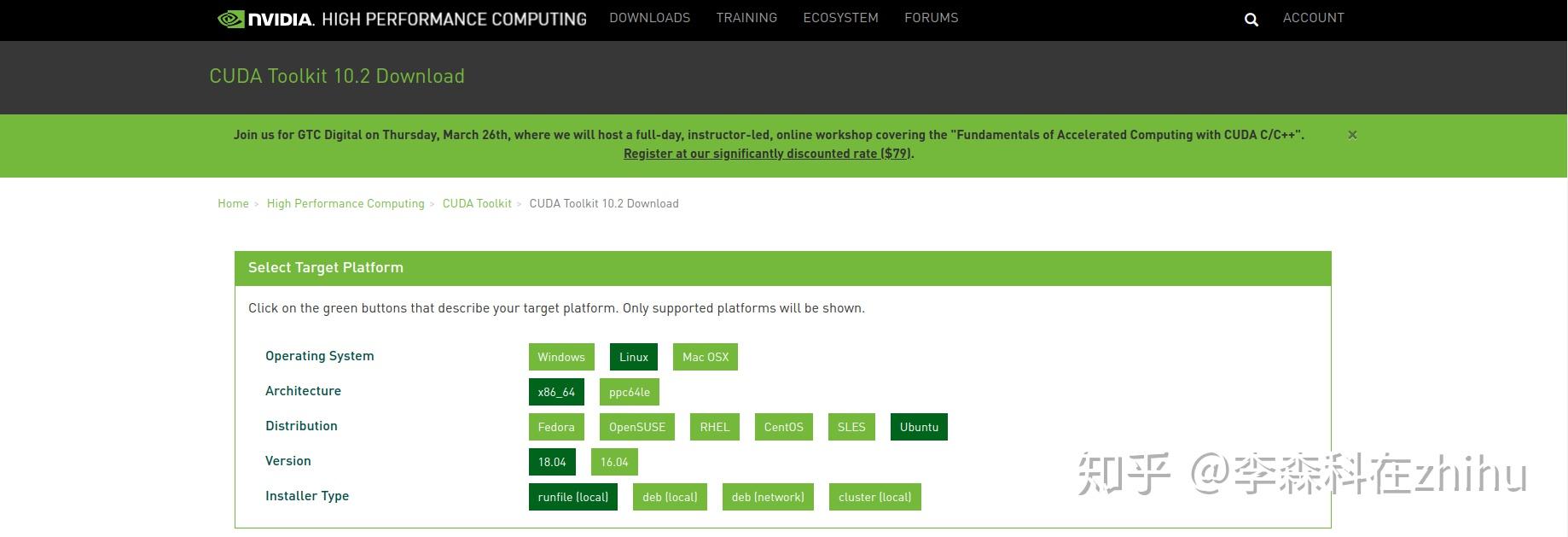

$ sudo apt-get install linux-image-generic linux-image-extra-virtual $ sudo apt-get install libopenblas-dev liblapack-dev $ sudo apt-get install build-essential cmake git unzip pkg-config Installing the CUDA ToolkitĪssuming you have either (1) an EC2 system spun up with GPU support or (2) your own NVIDIA-enabled GPU hardware, the next step is to install the CUDA Toolkit.īut before we can do that, we need to install a few required packages first: $ sudo apt-get update This blog post provides step-by-step instructions (with tons of screenshots) on how to spin up your first EC2 instance and use it for deep learning. Note: Are you new to Amazon AWS and EC2? You might want to read Deep learning on Amazon EC2 GPU with Python and nolearn before continuing. If you’re interested in deep learning, I highly encourage you to setup your own EC2 system using the instructions detailed in this blog post - you’ll be able to use your GPU instance to follow along with future deep learning tutorials on the PyImageSearch blog (and trust me, there will be a lot of them). Insider the remainder of this blog post, I’ll detail how to install the NVIDIA CUDA Toolkit v7.5 along with cuDNN v5 in a g2.2xlarge GPU instance on Amazon EC2. On the other hand, the g2.2xlarge instance is a totally reasonable option, allowing you to forgo your afternoon Starbucks coffee and trade a caffeine jolt for a bit of deep learning fun and education. You can also upgrade to the g2.8xlarge instance ( $2.60 per hour) to obtain four K520 GPUs (for a grand total of 16GB of memory).įor most of us, the g2.8xlarge is a bit expensive, especially if you’re only doing deep learning as a hobby. The GPU included on the system is a K520 with 4GB of memory and 1,536 cores. This instance is named the g2.2xlarge instance and costs approximately $0.65 per hour. 
How to install CUDA Toolkit and cuDNN for deep learningĪs I mentioned in an earlier blog post, Amazon offers an EC2 instance that provides access to the GPU for computation purposes. Feel free to spin up an instance of your own and follow along.īy the time you’re finished this tutorial, you’ll have a brand new system ready for deep learning. Specifically, I’ll be using an Amazon EC2 g2.2xlarge machine running Ubuntu 14.04. In the remainder of this blog post, I’ll demonstrate how to install both the NVIDIA CUDA Toolkit and the cuDNN library for deep learning. Using the cuDNN package, you can increase training speeds by upwards of 44%, with over 6x speedups in Torch and Caffe. The cuDNN library: A GPU-accelerated library of primitives for deep neural networks.This toolkit includes a compiler specifically designed for NVIDIA GPUs and associated math libraries + optimization routines. The NVIDIA CUDA Toolkit: A development environment for building GPU-accelerated applications.If you already have an NVIDIA supported GPU, then the next logical step is to install two important libraries: And the more GPUs you have, the better off you are. If you’re serious about doing any type of deep learning, you should be utilizing your GPU rather than your CPU. The cufftXtMalloc() API now allocates the correct amount of memory for multi-GPU 2D and 3D plans.Click here to download the source code to this post.The Warp Intrinsics _shfl() function for FP16 data types now has a *_sync equivalent.Strict alias warnings when using GCC to compile code that uses _half data types (cuda_fp16.h) have been disabled.The performance of cudaLaunchCooperativeKernelMultiDevice() APIs has been improved.MPS Server returns an exit status of 1 when it successfully exits.The environment variable for disabling unified memory, CUDA_DISABLE_UNIFIED_MEMORY, is no longer supported.These routines are not supported on GPUs based on the Kepler architecture, namely Tesla K40 or K80. 
cuBLAS GemmEx routines, namely cublasgemm extensions for mixed precision, are supported only on GPUs based on the Maxwell or later architectures. Use equivalent functionality in the updated fp16 header file from the CUDA toolkit. The built-in functions _float2half_rn() and _half2float() have been removed. Previously, compilation would fail at the host compilation step. An attempt to define a _global_ function in a friend declaration now generates an NVCC diagnostic. Only the RTM version (vc15.0) is fully supported.

Microsoft Visual Studio 2017 (starting with Update 1) support is beta. A code sample for the new CUDA C++ warp matrix functions has been added.For information about enhancements to Volta MPS, see Multi-Process Service () in the NVIDIA GPU Deployment and Management Documentation. CUDA 9 now supports Multi-Process Service (MPS) on Volta GPUs.
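If you would rather not type the prerequisite installs from the "Installing the CUDA Toolkit" step one at a time, they can be gathered into a small script. The following is only a sketch: the `DRY_RUN` switch, the `run` and `install_prereqs` helper names, and the `-y` flag (which answers apt-get's confirmation prompts automatically) are my own assumptions and not part of the original commands. With `DRY_RUN` left at its default of 1, the script only prints what it would run.

```shell
#!/bin/sh
# Sketch: run the tutorial's prerequisite installs in the order given.
# DRY_RUN is a hypothetical switch (not from the post): when it is 1
# (the default here) each command is printed instead of executed.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Package lists are copied from the tutorial; -y is an added assumption.
# The && chain stops at the first command that fails.
install_prereqs() {
    run sudo apt-get update &&
    run sudo apt-get install -y build-essential cmake git unzip pkg-config &&
    run sudo apt-get install -y libopenblas-dev liblapack-dev &&
    run sudo apt-get install -y linux-image-generic linux-image-extra-virtual &&
    run sudo apt-get install -y linux-source linux-headers-generic
}

install_prereqs
```

To actually perform the installs, save the script under a name of your choosing (say `install_prereqs.sh`) and run it as `DRY_RUN=0 sh install_prereqs.sh`. Be aware that with `-y` you will not get the chance to review apt-get's package list before it proceeds, and some packages may still show their own interactive prompts.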
